Here but when: The archive across space and time

2020, United Kingdom, London

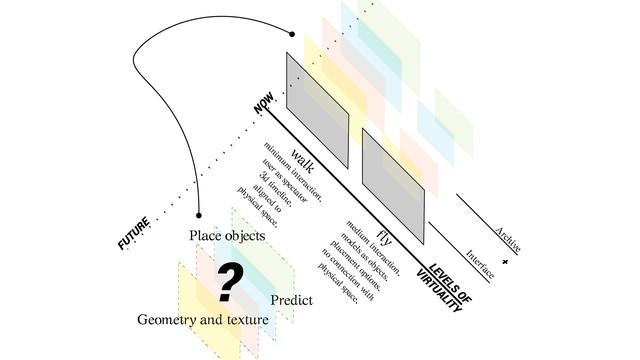

An interactive network of spatial information augmenting the present configuration with ones from the past through various levels of accessibility.

Can we use real-time depiction as a dynamic tool for recording spatial memory through media traditionally used to represent space? Cultures have always been connected to technical life, as humans have long extended their capabilities through technology. In the information society, however, interfaces that enmesh our work in so-called real time dominate our everyday lives, while our online activity is constantly being stored in a dynamic archive. This research project explores the connection of the archive to time and spatial memory. It focuses on contemporary technical apparatuses of recording, taking 3D scanning as an example of a spatial digital replication tool.

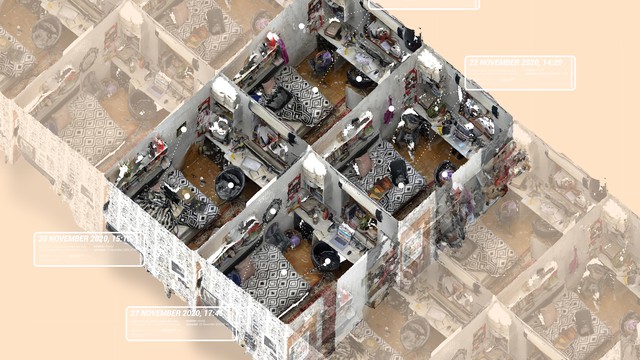

The project investigates the above question through the development of an archive of 3D scan models which can be dynamically updated with data from users. An interactive network of spatial information is created, highlighting the importance of tool-based fusion, level of accessibility and experience. It outlines the qualities of organising and visualising data needed to build, to a degree, a collective memory.

The 3D scans used in the interface consist of two basic types of information: position and colour, which after some basic operations are expressed as computational geometry and texture respectively. These features are directly linked to the time parameter, as they are affected by lighting conditions, reflections and any other changes that affect how the space appears. As Derrida explains, “ideal Objectivity is not fully constituted”; in other words, for information to be sustained through time, it must be capable of being incarnated in a transmissible form and organised so that it is readable and graspable. The compilation of a certain amount of information in one platform forms an archive or, in our case, collective knowledge based on an information distribution infrastructure.

This interactive archive enhances human experience by augmenting the present spatial configuration with those of the past. The 3D scans are overlaid on the physical environment and can be viewed in a sequence based on the date and time they were captured. The system can be adapted to different scales and functions. Beyond the room scale, other potential applications include a museum, for navigating through past exhibitions, or a construction site, for exploring the progress of the work.
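The idea of scans carrying position and colour data and being viewed in order of capture time can be illustrated with a minimal sketch. This is not the project's implementation, which is built in Unity. The names `Scan`, `ScanArchive`, `add` and `sequence` are hypothetical, and the in-memory sorted list is an assumption made only for illustration.

```python
from bisect import insort
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(order=True)
class Scan:
    """One 3D scan; ordering compares capture time only (hypothetical model)."""
    captured_at: datetime
    # Per-point data, excluded from comparisons: (x, y, z) and (r, g, b) tuples.
    positions: list = field(default_factory=list, compare=False)
    colours: list = field(default_factory=list, compare=False)


class ScanArchive:
    """Keeps user-contributed scans sorted by capture time."""

    def __init__(self):
        self._scans = []

    def add(self, scan):
        # insort keeps the list ordered, so scans may arrive in any order.
        insort(self._scans, scan)

    def sequence(self):
        # Oldest first: the order in which the scans would be stepped through.
        return list(self._scans)


archive = ScanArchive()
archive.add(Scan(datetime(2020, 3, 1, 10, 0), [(0.0, 0.0, 0.0)], [(255, 255, 255)]))
archive.add(Scan(datetime(2020, 1, 15, 9, 30), [(0.1, 0.0, 0.0)], [(200, 180, 160)]))
ordered = archive.sequence()
```

Keeping the list sorted at insertion time means the viewing sequence is always ready without re-sorting when a new scan is contributed.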

Details

Team members : Iliana Papadopoulou, Sathish Somasundaram, Ava Fatah gen Schieck (Supervisor)

Project leader(s) : Iliana Papadopoulou, Sathish Somasundaram

Company : The Bartlett School of Architecture, University College London (UCL)

Descriptions

Technical Concept : The immersive environment is developed using the game engine ‘Unity’ and the Vuforia SDK. The 3D scans are rendered as meshes and visualised in a sequence based on time periods. The interface takes the form of an augmented reality mobile application which creates a gradual transition of virtuality between the time periods. Its key feature is the Time Machine, a two-dimensional graphic element which allows the user to navigate through older 3D scans of the space at a specific location. Additionally, the archive is accessible through “spots of information”. The discrete objects that make up the 3D scans each have their own local sub-archive: organised and catalogued information about their past states. This local information is only visible when the user is near the objects.
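The two interactions described above, navigating through time and revealing local information by proximity, could be sketched as follows. This is an illustrative Python sketch rather than the project's Unity code. The function names, the linear slider mapping and the 1.5 m radius are all assumptions introduced here.

```python
import math


def select_scan_index(slider, scan_count):
    """Map a slider value in [0, 1] to a scan index (0 = oldest scan).

    Assumes the Time Machine behaves like a linear slider; the source
    only describes it as a two-dimensional graphic element.
    """
    if scan_count <= 0:
        raise ValueError("the archive must contain at least one scan")
    clamped = min(max(slider, 0.0), 1.0)
    return round(clamped * (scan_count - 1))


def is_sub_archive_visible(user_pos, object_pos, radius=1.5):
    """Show an object's local sub-archive only when the user is nearby.

    The radius (in metres) is a hypothetical threshold.
    """
    return math.dist(user_pos, object_pos) <= radius


newest = select_scan_index(1.0, 5)
near = is_sub_archive_visible((0.0, 0.0, 0.0), (1.0, 0.0, 0.0))
far = is_sub_archive_visible((0.0, 0.0, 0.0), (3.0, 0.0, 0.0))
```

Clamping the slider value keeps out-of-range input from selecting a scan that does not exist.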

Visual Concept : Comprehending the connection between space and time is a challenge with the existing devices used to record and represent spatial information. A sequential representation of the space in relation to time, retrieved from an organised archive, can help us better comprehend this link. It also opens up the possibility of rendering the memory from different perspectives for different users, depending on their location inside the space and the time they recall from the archive.

Credits

The team
